This weekend I attended the Quantified Self conference in Amsterdam. The number of participants was just short of 300, of whom 90(!) were involved in some way in a session. They call this a “carefully curated unconference”: they started by checking out all the registrants and then connecting with them if they looked interesting. Fast Moving Targets created some videos about the event. I am always interested in the commercial parties who think they have enough affinity with the topic to sponsor an event. In this case the three major sponsors were BodyMedia, FrieslandCampina, Dreamboard. Intel, Autodesk, 23andMe and Scanadu. Gary Wolf, a very thoughtful and reflective speaker, opened the conference by talking about the Quantified Self as a movement and its three central questions:

- What did you do?

- How did you do it?

- What did you learn?

Mood, Emotion, and Meaning

Jon Cousins from Moodscope (with a funny Twitter pic) and Robin Barooah from Sublime.org talked about mood. Jon started off by talking about the large number of people in our society who have a mental disorder, but it is important to be aware of your mood even if you don’t have mental issues. Emotion is something that changes very fast, whereas temperament changes much slower. Mood sits somewhere in the middle of that. Jon shared his own mood story and how he started to track his own depressive moods. He created a card-based scoring system and had a friend who wanted to see his “scores” daily. This immediately changed his mood for the positive. Moodscope now creates graphs like the following:

and can create Word clouds on the basis of your good and bad days:

Robin then talked about the stress he experienced in 2008, the most painful year of his adult life. He was at a point where he experienced real paranoia. He was aware, but couldn’t control it, very close to being psychotic. He started to meditate to help bring his stress levels down. He used an iPhone app to record his meditation practice. Next, he started to share his mood with a friend through things like Google Calendar and Dropbox. These quickly morphed into journal entries. He found out that the number of meditation minutes per day reflected the number of mood entries per day. He would see it as his ability to connect with the world. It is a signal about his whole life.

QS as a Catalyst for Learning?

I personally hosted this break-out conversation. As I was very busy facilitating, I couldn’t really take notes during the session. Instead I will share my preparations and the questions that I wanted to talk about.

A simple way of describing how “learning” works is as a two-step cyclical process:

- Do something that you have not done before

- Reflect on what happened

Can quantifying yourself speed up this cyclical process? In which ways? Examples? Will you be more or less daring if you can see your past failures/patterns?

I’ve said before that the costs of self-tracking will be so low that not measuring yourself continuously will be considered “irresponsible”.

Will there be a next wave of measuring cognitive processes rather than physical aspects? What other things can be measured besides attention? What is the modern-day version of Ebbinghaus’ Forgetting Curve (1885)?

Quite a while back Danny Hillis wrote about Aristotle:

Imagine that this tutor program can get to know you over a long period of time. Like a good teacher, it knows what you already understand and what you are ready to learn. It also knows what types of explanations are most meaningful to you.

Which services already give me insight into what I have studied? Why isn’t Amazon giving me a temporal word cloud? What kind of data could MOOCs deliver?

David Wiley has written the following:

One can easily imagine submitting their usernames for Google Web History, Facebook, Twitter, Delicious, Blogs, Google Reader, YouTube, etc. IN PLACE OF taking a four hour high stakes exam like the ACT or GRE. Why make a high stakes decision based on a few hundred data points generated in one morning (when you could be sick, distracted, etc.) when you could get 1,000,000 data points generated over three years?

Could certification become automatic and data based? Why?

I have been very interested in the risks and externalities of “datasexualism” in the context of learning.

There are problems with quantifying yourself: forgetting is beneficial (it has a natural function, and lifelogging is incompatible with true nostalgia) and the filter bubble is a real risk (only reading more of the news you’ve read before, positive feedback loops). Most importantly: many things aren’t quantifiable in any sensible way. Morozov writes in To Save Everything, Click Here:

Perhaps this is how aesthetics was meant to end, with a bunch of enthusiastic devotees of the Quantified Self movement comparing notes on whether the nudes of Picasso or Degas generate longer erections.

What about equality? Morozov again:

If you are well and well-off, then self-tracking will make things better for you.

Lightning talks

These were very short talks with slides moving according to a set schedule. There was somebody who called self-tracking looking in a rearview mirror and told us we needed to start looking forward. He has created an app that helps you get into flow. Another guy showed us Momentoapp, which I certainly would have tried if it weren’t iOS only. We also had people talking about tracking Parkinson syndrome (in cooperation with the Cure Parkinson organization). Somebody pitched AchieveMint (“Life Rewarded”), which allows people to get real-life rewards for tracking their healthy behaviour and aims to create “a market” for healthy behaviour. From my perspective: yet another thing that doesn’t belong on a market. An Intel UX designer/researcher talked about using biomimicry as a way to present data.

Surprises from 4 years of tracking books read

Rajiv Mehta talked about his four years of tracking the books he read. I was interested because I do the same. He used a nice way of graphing, created from the covers of the books. Only after he started to analyze the list did he see that the light, junky reading was crowding out the substance.

Activity Trackers

This session discussed the different activity trackers that are currently on the market. It was led by Michael Kazarnowicz, who has used all these devices (at the same time) for at least ten days. We discussed the pros and cons of the Moves App, the Nike Fuelband, the Fitbit, the Basis, the Bodymedia FIT and the Jawbone UP. Somebody else mentioned ActiveLink, which is a rebranding of the DirectLife. Michael also quickly showed the LUMOback sensor that helps improve your posture.

To me the interesting thing is what platform will integrate the data of all these trackers. Michael mentioned TicTrac, which seems to be worth a look.

All the information that Michael shared is also available on his blog.

The Self in Data

Sara Marie Watson is a researcher at the Oxford Internet Institute and is interested in what it means to have this data about ourselves. She showed the large dichotomous narrative of “big data is amazing” on the one hand and “oh no, they know so much about you” on the other hand. She has done quantitative research into how the Quantified Self movement talks about data. Often this talk is about the technical side of things: ease of capture, portability, flexibility, analysis and correlation, scientific methods, legibility and visualization and epistemology.

She wanted to talk about the following questions:

- How is our identity, sense of self tied to our data?

- What are some of our assumptions about what data can do?

- What are the metaphors we use to describe how we relate to and use our data?

- What are the limitations of data? What can’t data tell us about ourselves?

- What does it mean to have a digital, numerical representation of ourselves in data?

These questions led to a lot of discussion about multiple selves, whether each device creates a “new self”, how the self is sociologically constructed, the Hawthorne effect (again), numbers as a statement of authority (so giving individuals the ability to say something with authority about themselves) and whether QS isn’t more like art. There were statements like “The macroscope can be seen as the first post-mirror metaphor.” This seemed to be the ultimate session for anthropologists and QS-philosophers who wanted to meet self-tracking hipsters. Fascinating stuff.

One person coined a new piece of jargon: quantifying yourself allows you to disaggregate yourself (a way of quantitative auto-biography). This is necessary because the whole self (the thing that we label with our name) is just too integrated.

QS & Medicine: Caring for Ourselves

Frontiers in self-tracking was a talk about the blurring lines between self-tracking and health by Eri Gentry. She talked about things like Piddle and Ucheck, both urine analysers, or the AliveCor for using your iPhone to create an ECG. She mentioned Max Little, who created a 30-second phone call test that can diagnose Parkinson’s:

[youtube=http://www.youtube.com/watch?v=HWsehvUkI-c]

And talked about apps for measuring eye sight and hearing loss.

Genetic tests are becoming available to the public. Not just 23andme (at least 30% of the people in the audience have done that) but also uBiome which is sequencing microbiomes.

Next was a talk about arterial stiffness, the forgotten factor in cardiovascular health (“sponsored” by FrieslandCampina?!). Arterial stiffness is a strong independent predictor of heart attack and stroke. The speaker talked about how to measure arterial stiffness and how you can keep your blood vessels in good condition (by for example eating cheese, surprise surprise).

Sara Riggare (“Not patient, but im-patient”) talked about how she optimized her Parkinson’s medication. She gets to spend 1 hour of the year in health care, the 8765 other hours are self-care for her. She says that having a chronic disease means lifelong learning. She experimented on herself by measuring her medicine intake and doing a tapping test on her phone at regular intervals. She then started “playing” with when she would take her medication. Her methodology needed two different apps and lots of plotting in Excel. This wasn’t easy enough for other Parkinson sufferers to use. She managed to find funding to develop an app that could do this.

Life logging with Memoto

The day finished with a town hall meeting in which we discussed the implications of people wearing life logging devices. A few people wore a Memoto camera (it takes a picture every 30 seconds) for the day. These discussions were mainly about privacy. We tried to talk about how it felt to be recorded. The social norms for these kinds of cameras are still in development, but are also already in place in some form.

What Makes Data Open?

David Andre talked about self-centered open data. He is a scientist who has worked at BodyMedia since 2002, making a lot of body tracking devices. In 2008 he started a hedge fund because he found out that tracking finances is in many ways similar to tracking people. With open data there is a monitoring stack and some key features: play at any level, replace modules and use others’ work, scaffold, build, mix and match and use machine learning and big data. Often there are problems with APIs: no access to the firmware, often you only get post-processed data, the optimization might have caused inconsistencies and often you need to be a business partner to use an API well. Even if we were able to pull all the data together, we would still have something that is analogous to the tower of Babel. The challenges are:

- Different time models or alignments

- Different semantics (e.g. blood test versus weight versus heart-rate versus sleep)

- Vendors change hardware and software

- Doing analysis (Big Data) on the merged data is harder than it seems

We also need to realize that people aren’t just a collection of minutes.

Solutions for a way forward are:

- To have a data model that has meta-data about the data that you are looking at (protocols, timing, version numbers, etc)

- Use derivation trees that show how the raw data is modified

- Use data models that are truly self-centered, we need to enable tools that are as useful for self-centered time series data as spreadsheets were for tabular data
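The timing problem in particular is easy to underestimate. Below is a minimal sketch of what aligning two trackers could involve, assuming GNU date is available. The helper names and the two sample timestamps are invented for illustration and do not come from David's talk:

```shell
#!/bin/bash
# Hypothetical helpers; relies on GNU date (-d), as found on most Linux systems.

# to_epoch "TIMESTAMP WITH OFFSET": seconds since 1970-01-01 UTC.
to_epoch() {
  date -u -d "$1" +%s
}

# minute_bucket EPOCH: round down to the start of the minute.
minute_bucket() {
  echo $(( $1 / 60 * 60 ))
}

# Two made-up samples describing the same minute, reported in different time zones.
tracker_a="2013-05-11 14:30:20 +0200"
tracker_b="2013-05-11 12:30:45 UTC"

minute_bucket "$(to_epoch "$tracker_a")"
minute_bucket "$(to_epoch "$tracker_b")"
```

Both lines print the same value, because once the offsets are normalised the two samples fall in the same UTC minute. Without the offset recorded as meta-data, there would be no way to know that.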

David then joined a panel with Marc Rijnveld (from Rotterdam Community Solutions, providing “tools for self-organization”) and Anne Wright (from BodyTrack, a set of open source tools to capture and explore data on activities, environmental and food inputs, and health status over time, now working together with Fluxtream). They had a short discussion about the services that sit in between the user and their multiple devices (products like Singly). Anne mentioned Open mHealth, an open software architecture for mobile health integration. There was a good discussion about the business models for vendors to open up their data, how weird it is that timestamps are still an issue in this community and the allure of starting projects to solve all these problems in one go.

Some interesting links

In the Twitter stream and via Dorien Zandbergen I found a few interesting articles about the Quantified Self online:

- Quantifying your Self? Need a human-centered data structure?

- The Woman vs. The Stick: Mindfulness at Quantified Self 2012

- The Quantified Self Movement is not a Kleenex

- Quantified Self as Soft Resistance

Encountering the Unquantified Other

Dorien Zandbergen and Zane Kripe hosted this session, which explored the implicit ideologies that quantified selvers have versus the quantified other. Dorien sketched how quantifying ourselves is actually quite a bit older than we often like to think. She asked us a question: to what extent do you feel comfortable talking about your quantified self practices in each of the following contexts: work, family, friends, public space? We then looked at what people felt when they shared the fact that they quantify themselves and their data. One participant mentioned how uncomfortable he is sharing his sleeping data, even with his family (“you have no right to be grumpy, you have slept for eight hours!”).

I shared my perspective on how when sensors become completely ubiquitous and unobtrusive (in a few years) the perspective will actually shift: you will be irresponsible if you don’t measure yourself continuously. It will be seen as if you don’t care about yourself, basically like not brushing your teeth.

Lightning talks on Sunday

Again a set of lightning talks. I tried to mainly capture the people and the links:

- Anne Wright talked about successful strategies for data aggregation. She works with a system called Fluxtream that can bring together all kinds of data from different services using connectors (think emails, calendars, activity trackers, image sharing accounts, etc.). This data is then shown on an explorable timeline. There is also a FluxtreamCapture iOS app and they are working on creating a spectral view.

- Papadopoulos Homer talked about USEFIL which “aims to address the gap between technological research advances and the practical needs of elderly people by developing advanced but affordable in-home unobtrusive monitoring and web communication solutions [and] intends to use low cost “off-the-shelf” technology to develop immediately applicable services that will assist the elderly in maintaining their independence and daily activities.”

- Carlos Rizo is a fan of mindfulness. He explained how he regularly sets a new password to unlock his phone and embeds behavioural cues in that password: things like reviewtodo, drinkwater, smilemore, remembertosleep, givethanks, breathnow, micromeditate, etc. Nice idea!

- Matteo Lai works for Empatica, who make emotional tracking hard- and software at a personal level and in real time. They are specifically interested in following stress (they developed a stress sensor). They did an experiment where they tracked the stress levels of a whole team rather than individuals.

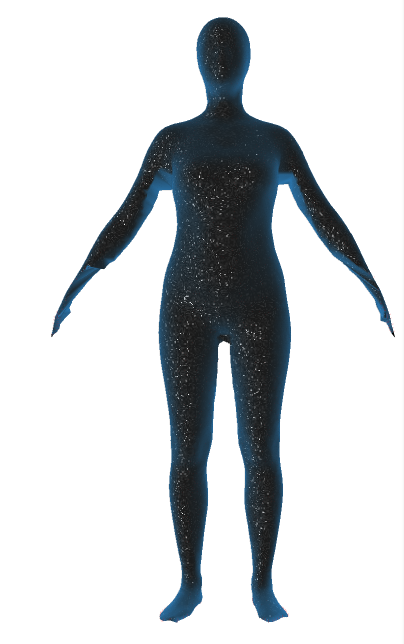

- Nell Watson from Poikos talked about their technology to measure the body in 3D with a smartphone based on two snapshots (from the front and from the side). They are a platform with an SDK, an API and a white-label program. Try out their iOS app that gets your clothes measurements: FlixFit. They are actually creating a global anthropological database (see QSU.me).

- Marco Altini, who works for imec, talked about how it isn’t about being fat, but about being fit, so devices should measure fitness and not fatness. He is working on the next generation of trackers that should enable this.

- Eric Jain works on Zenobase, which pulls in data from different sources like the Fitbit, Withings scale or Foursquare. All data is put in automatically as much as possible, so no tedious entry forms. The aim is that it can track anything.

- Stan James had his webcam take pictures of himself every 30 minutes. He did this for a full year and tagged all the pictures manually so that he could see how often he works on his laptop in his bed or how often he works in coffee shops, touches his face or is on the phone. Read more about his Lifeslice project here.

QS Security and Privacy

James Burke started the conversation by mentioning two articles: Hackers Could Access Pacemakers From A Distance And Deliver Deadly Shocks and Fitbit users are unwittingly sharing details of their sex lives with the world. He also mentioned the by now infamous Target example.

We then discussed things like consent and the terms of service of a product. One participant in the session is a diabetic who shares data with the provider of her medicine. She doesn’t like that because she isn’t always the “best patient”. James put up a picture of Google Glass and we talked about who owns the data that the device captures. He also told us how the NSA is currently recording all communications in the USA, which led to a debate about the (false) dichotomy between security and privacy.

We talked about the changed EU Data protection directive with the following key changes:

- A single set of rules on data protection, valid across the EU. Unnecessary administrative requirements, such as notification requirements for companies, will be removed. This will save businesses around €2.3 billion a year.

- Instead of the current obligation of all companies to notify all data protection activities to data protection supervisors – a requirement that has led to unnecessary paperwork and costs businesses €130 million per year, the Regulation provides for increased responsibility and accountability for those processing personal data.

- For example, companies and organisations must notify the national supervisory authority of serious data breaches as soon as possible (if feasible within 24 hours).

- Organisations will only have to deal with a single national data protection authority in the EU country where they have their main establishment. Likewise, people can refer to the data protection authority in their country, even when their data is processed by a company based outside the EU. Wherever consent is required for data to be processed, it is clarified that it has to be given explicitly, rather than assumed.

- People will have easier access to their own data and be able to transfer personal data from one service provider to another more easily (right to data portability). This will improve competition among services.

- A ‘right to be forgotten’ will help people better manage data protection risks online: people will be able to delete their data if there are no legitimate grounds for retaining it.

- EU rules must apply if personal data is handled abroad by companies that are active in the EU market and offer their services to EU citizens.

- Independent national data protection authorities will be strengthened so they can better enforce the EU rules at home. They will be empowered to fine companies that violate EU data protection rules. This can lead to penalties of up to €1 million or up to 2% of the global annual turnover of a company.

- A new Directive will apply general data protection principles and rules for police and judicial cooperation in criminal matters. The rules will apply to both domestic and cross-border transfers of data.

Joshua Kauffman walked into the room and dropped a little bomb: he said he had just patented a Google Glass app that makes use of the public database of images from convicts and allows you to protect your children from pedophiles and might help you in your business interactions. People looked shocked, after which he revealed that of course he had not done that, but that the technology is there and that it would be trivial to make. We had a discussion about the sociological implications of technology. We touched on Paul Virilio’s idea that every technological development brings a new accident.

Tracking Subjective States

Dave Marvit showed us a slide about all the body data that can currently be captured by consumer grade devices. All of these are objective states. Ubiquitous continuous monitoring for many of these states is coming very soon. Dave then showed a prototype device that he is involved with that can measure stress levels (mainly on the basis of heart rate variability). They can map a person’s stress level with their GPS data (visualizing your pre-exit stress for example). He suggested we could aggregate subjective data, basically turning people into sensors. What would happen if we equipped all of Tokyo’s subway drivers with a stress sensor? What could it tell us about where the dangerous spots in the subway network are?

One participant in the session mentioned Christian Nold’s Bio Mapping project. Another mentioned how the quantified self movement is all about focusing intention.

Another “subjective” (maybe affective is a better word) state that people might want to track is for example drowsiness. Dave said that he wouldn’t mind it if cars would refuse to drive when somebody is too drowsy to drive.

Natasha Schüll described a little bit how casinos are measuring their customers (at slot machines for example) so they can give them the right stimulus at the right time. She has written a book about the topic titled Addiction by Design: Machine Gambling in Las Vegas.

Somebody from the BBC mentioned the research projects he is involved with: basically a way to change the programming on the basis of what the TV (and other sensors) know about the people watching it (made us think of George Orwell!).

Life-Logging at Different Speeds

Cathal Gurrin summarized seven years of life logging. He wore a sensecam for seven years. Lifelogging is the automatic multimodal sensing of real world life experience and storing that in an archive. He tried to quantify as much as possible and gathered as much as he could. Lifelogging is very much about looking out. He gathered about 2 million photographs a year, 4 million GPS coordinates, screenshots of the computers he is using, etc. He needed software to process all this data. He noticed that the longer he wore the camera, the more valuable the data became for him. Currently he uses the Autographer. Over the years he learned a few things:

- No manual input please

- We do about 30 different things every day, these can be segmented in different moments automatically

- Lots of moments are insignificant, but some are very meaningful

- He doesn’t look at the images, software does (has an impact on privacy)

- The challenge is to extract meaning

- It is possible to design privacy into the systems

- Only 4% of the images are ‘good’, 40% are useless, 56% are for software processing

- People don’t mind the camera, but they do care about the audio

- You get used to it very quickly and stop acting differently after a few days

- There is no control of capture, so it captures embarrassing stuff

- Browsing or simple search is very hard when the data grows, you need advanced search

- The data is used for reflection, recall (validation), retrieval (finding something specific) and reminiscence (e.g. sharing with friends) (the information needs as identified by Microsoft, you always want to remember future intentions)

- It requires a significant investment in software (machine learning on images)

What he wants to do is automatically identify what the person is doing and segment the data into activity types. You can then identify long-term trends and activities. They have currently built some real-world prototypes. They have created visual diaries, food logs (automatic identification of food), an installation called the colour of life (“seeing your life in one glance” on a colour chart), a thing called “What I’ve Seen” which allows you to find out, based on open source machine vision tools, when you’ve seen an object before (a marketing dream: when did a person see the Heineken logo?), and device personalities (“a conversation with the coffee machine”). They have now built a platform called Senseseer that unifies these things.

Next up was Buster Benson who is both a QS fanatic and sceptic. He likes to live publicly and sees privacy as a side-effect of not being connected. Check out his public beliefs on Github. He showed us a few of his projects like 750words and Health Month.

He talked to us about his project “8:36pm”, which he has done for five years. He takes a photo at 8:36pm every day and captions it. He now has about 1785 photos with about 98% coverage. It is about his uncurated self. He is a fan of lifelong projects because they are so impossible.
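As a rough sanity check on that coverage figure, here is my own back-of-the-envelope sketch. It assumes that five years means 1826 days (including one leap day), and the function name is made up:

```shell
#!/bin/bash
# coverage_percent PHOTOS DAYS: percentage of days with a photo, one decimal.
coverage_percent() {
  awk -v photos="$1" -v days="$2" 'BEGIN { printf "%.1f\n", photos / days * 100 }'
}

# Assumption: five years counted as 5 * 365 + 1 = 1826 days.
coverage_percent 1785 1826
```

This prints 97.8, which rounds to the 98% he mentioned.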

Miscellaneous

There were a few sessions I wasn’t able to attend. I would have been interested in the app for good posture created by the people behind the Gokhale method and would have loved to see more about the Human Memome project.

After hearing Stan James talk at lunch I quickly wrote a little bash script that would capture an image of me behind my webcam and timestamp it. I then used cron to run the script every 10 minutes. I’ll leave it running for a little while to see what I can learn from that. For now I’ve mostly learned that my laptop has a very crappy camera (although I managed to fix the discoloration).

thanks – very helpful way of catching up with the QS conference

You wrote a great summary, thanks!

Would you mind sharing the bash script and how you set up cron to make your camera take the pictures? That would be greatly appreciated.

Hey Lisa, I run Xubuntu Linux and have installed a little program from the repositories called “streamer”. Next I wrote the following little bash script and called it webcamcapture.sh (and made sure it was executable by running “chmod +x webcamcapture.sh”):

#!/bin/bash

# Add the date and time to a variable

now=$(date +"%Y%m%d-%H%M%S")

# Capture one 640x480 frame from the first webcam into the home directory

streamer -c /dev/video0 -s 640x480 -o "$HOME/$now.jpeg"

Obviously you might want to change the location where it is stored, now it stores it in your home directory.
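If you would rather have one folder per day, a minimal sketch could look like this. The function name capture_path and the ~/webcam folder are made up for illustration, so adapt them to your own setup:

```shell
#!/bin/bash
# capture_path BASE_DIR STAMP: build a per-day path for one picture.
# STAMP is expected in the same format as $now, e.g. 20130512-143000.
capture_path() {
  local base_dir="$1" stamp="$2"
  local day="${stamp%%-*}"   # the part before the dash: YYYYMMDD
  printf '%s/%s/%s.jpeg\n' "$base_dir" "$day" "$stamp"
}

# Possible use inside webcamcapture.sh (left commented out; "webcam" is a made-up folder):
# out=$(capture_path "$HOME/webcam" "$(date +"%Y%m%d-%H%M%S")")
# mkdir -p "$(dirname "$out")"
# streamer -c /dev/video0 -s 640x480 -o "$out"
```

Keeping the path logic in its own function means you can check the naming without needing the webcam at all.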

Finally I edited the cron tab by typing “crontab -e” and making sure the following line was added:

*/15 * * * * ~/webcamcapture.sh

You can change the 15 to 30 if you want a picture once every 30 minutes instead of every 15 minutes like I’ve set it up.

Good luck!

Hans

Thank you; this works perfectly on my Linux Mint partition; will try to set it up on my Mac too.

Wow. A great and impressive article about the QS conference. Thanx!