These are my notes and reflections for the second and third days of the 10th International Conference on Knowledge Management and Knowledge Technologies (I-KNOW 2010).

Another appstore!

Rafael Sidi from Elsevier kicked off the second day with a talk titled “Bring in ‘da Developers, Bring in ‘da Apps – Developing Search and Discovery Solutions Using Scientific Content APIs” (the slightly ludicrous title was fashioned after this).

He opened his talk with this Steve Ballmer video which, if I were the CIO of any company, would seriously make me reconsider my customer relationship with Microsoft:

[youtube=http://www.youtube.com/watch?v=8To-6VIJZRE&rel=0]

(If you enjoyed that video, make sure you watch this one too: first with the sound turned off and only then with the sound on.)

Sidi is responsible for Elsevier’s SciVerse platform. He has seen that data platforms are increasingly important, that there is an explosion of applications and that people work in communities of innovation. He used Data.gov as an example: it went from 47 sources to 220,000+ sources within a year’s time and has led to initiatives like Apps for America. We need to have an “Apps for science” too. Our current scientific platforms make us spend too much time gathering instead of analysing information and none of them really understands the user’s intent.

The key trends that he sees on the web are:

- Openness and interoperability (“give me your data, my way”). Access to APIs helps to create an ecosystem.

- Personalization (“know what I want and deliver results on my interest”). Well known examples are: Amazon, Netflix and Last.fm

- Collaboration & trusted views (“the right contacts at the right time”). Filtering content through people you trust. “Show me the articles I’ve read and show me what my friends have read differently from me”. This is not done a lot. Sidi didn’t mention this but I think things like Facebook’s open API are starting to deliver this.

So Elsevier has decided to turn SciVerse, the portal to their content, into a platform by creating an API with which developers can create applications. Very similar to Apple’s appstore, this will include a revenue sharing model. They will also nurture a developer community (bootstrapping it with a couple of challenges).

He then demonstrated how applications would be able to augment SciVerse search results, either by doing smart things with the data in a sidebar (based on aggregated information about the search result) or by modifying a single search result itself. I thought it looked quite impressive and thought it was a very smart move: scientific publishers seem to be under a lot of pressure from things like Open Access and have been struggling to demonstrate their added value in this Internet world. This could be one way to add value. The reaction from the audience was quite tough (something Sidi already preempted by showing an “I hate Elsevier”-tweet in his slides). One audience member: “Elsevier already knows how to exploit the labour of scientists and now wants to exploit the labour of developers too”. I am no big fan of large publisher houses, but thought this was a bit harsh.

Knowledge Visualization

Wolfgang Kienreich demoed some of the knowledge visualization products that the Know-Center has developed over the years. The 3D knowledge space is not available through the web (it is licensed to a German encyclopedia publisher), but showed what is possible if you think hard about how a user should be able to navigate through large knowledge collections. Their work for the Austrian Press Agency is available online in a “labs” environment. It demonstrates a way of using faceted search in combination with simple but insightful visualizations. The following example is a screenshot showing which Austrian politicians have said something about pensions.

I have only learned through writing this blog post that Wolfgang is interested in the Prisoner’s Dilemma. I would have loved to have talked to him about Goffman’s Expression games and what they could mean for the ways decisions get made in large corporations. I will keep that for a next meeting.

Knowledge Work

This track was supposed to have four talks, but one speaker did not make it to the conference, so there were three talks left.

The first one was provocatively titled “Does knowledge worker productivity really matter?” by Rainer Erne. It was Drucker who said that it used to be the job of management to increase the productivity of manual labour and that it is now the job of management to make knowledge workers more productive. In one sense Drucker was definitely right: the demand for knowledge work is increasing all the time, whereas the demand for routine activities is always going down.

Erne’s study focuses on one particular part of knowledge workers: expert work which is judgement oriented, highly reliant on individual expertise and experience and dependent on star performance. He looked at five business segments (hardware development, software development, consulting, medical work and university work) and consistently found the same five key performance indicators:

- business development

- skill development

- quality of interaction

- organisation of work

- quality of results

This leads Erne to believe that we need to redefine productivity for knowledge workers. The focus shouldn’t just be on the quantity of the output, but more on its quality. So what can managers do knowing this? They can help their experts by being a filter, or by concentrating their work for them.

This talk left me with some questions. I am not sure whether it is possible to make this distinction between quantitative and qualitative output, especially not in commercial settings. The talk also did not address what I consider to be the main challenge for management in this information age: the fact that a very good manual worker can only be 2 or maybe 3 times as productive as an average manual worker, whereas a good knowledge worker can be hundreds if not thousands of times more productive than the average worker.

Robert Woitsch’s talk was titled “Industrialisation of Knowledge Work, Business and Knowledge Alignment” and I have to admit that I found it very hard to contextualize what he was saying into something that had any meaning to me. I did think it was interesting that he really went in another direction compared to Erne, as Woitsch does consider knowledge work to be a production process: people have to do things in efficient ways. I guess it is important to better define what it is we actually mean when we talk about knowledge work. His sites are here: http://promote.boc-eu.com and http://www.openmodels.at.

Finally Olaf Grebner from SAP research talked about “Optimization of Knowledge Work in the Public Sector by Means of Digital Metaphors”. SAP has a case management system that is used by organisations as a replacement for their paper based system. The main difference between current iterations of digital systems and traditional paper based systems is that the latter allows links between the formal case and the informal aspects around the case (e.g. a post-it note on a case-file). Digital case management systems don’t allow informal information to be stored.

So Grebner set out to design an add-on to the digital system that would link informal with formal information and would do this by using digital metaphors. He implemented digital post-it notes, cabinets and ways of search and his initial results are quite positive.

Personally I am a bit sceptical about this approach. Digital metaphors have served us well in the past, but are also the reason that I have to store my files in folders and that each file can only be stored in one folder. Don’t you lose the ability to truly re-invent what a digital case-management system can do for a company if you focus on translating the paper world into digital form? People didn’t like the new digital system (that is why Grebner was commissioned to make his prototype, I imagine). I believe that is because it didn’t allow the same affordances as the paper based world. Why not focus on that first?

Knowledge Management and Learning

This track had three learning related sessions.

Martin Wolpers from the Fraunhofer Institute for Applied Information Technology (FIT) talked about the “Early Experiences with Responsive Open Learning Environments”. He first defined each of the terms in Responsive Open Learning Environments:

Responsive: responsiveness to learners’ activities in respect to learning goals

Open: openness for new configurations, new contents and new users

Learning Environment: the conglomerate of tools that bring together people and content artifacts in learning activities to support them in constructing and processing information and knowledge.

The current generation of Virtual Learning Environments and Learning Management Systems have a couple of problems:

- Lack of information about the user across learning systems and learning contexts (i.e. what happens to the learning history of a person when they switch to a different company?)

- Learners cannot choose their own learning services

- Lack of support for open and flexible personalized contextualized learning approach

Fraunhofer is making an intelligent infrastructure that incorporates widgets and existing VLE/LMS functionality to truly personalize learning. They want to bridge what people use at home with what they use in the corporate environment by “intelligent user driven aggregation”. This includes a technology infrastructure, but also requires a big change in understanding how people actually learn.

They used Shindig as the widget engine and Opensocial as the widget technology. They used this to create an environment with the following characteristics:

- A widget based environment to enable students to create their own learning environment

- Development of new widgets should be independent from specific learning platforms

- Real-time communication between learners, remote inter-widget communication, interoperable data exchange, event broadcasting, etc.

He used a student population in China as the first people to try the system. It didn’t have the uptake that he expected. They soon realised that this was because the students had come to the conclusion that use or non-use of the system did not directly affect their grades. The students also lacked an understanding of the (Western?) concept of a Personal Learning Environment. After this first trial he came to a couple of conclusions. Some were obvious, like that you should respect the cultural background of your students or that responsive open learning environments create challenges on the technology and the psycho-pedagogical side. Others were less obvious, like that using an organic development process allowed for flexibility and for openly addressing emerging needs and requirements and that it makes sense to enforce your own development to become the standard.

For me this talk highlighted the still significant gap that seems to exist between computer scientists on the one side and social scientists on the other side. Trying out Personal Learning Environments in China is like sending CliniClowns to Africa: not a good idea. Somebody could have told them this in advance, right?

Next up was a talk titled “Utilizing Semantic Web Tools and Technologies for Competency Management” by Valentina Janev from the Serbian Mihajlo Pupin Institute. She does research to help improve the transferability and comparability of competences, skills and qualifications and to make it easier to express core competencies and talents in a standardized machine accessible way. This was another talk that was hard for me to follow because it was completely focused on what needs to happen on the (semantic) technical side without first giving a clear idea of what kind of processes these technological solutions will eventually improve. A couple of snippets that I picked up are that they are replacing data warehouse technologies with semantic web technologies, that they use OntoWiki, a semantic wiki application, that RDF is the key word for people in this field and that there is a thing called DOAC which has the ambition to make job profiles (and the matching CVs) machine readable.

The final talk in this track was from Joachim Griesbaum who works at the Institute of Information Science and Language Technology. The title of his talk must have been the longest in the conference: “Facilitating collaborative knowledge management and self-directed learning in higher education with the help of social software, Concept and implementation of CollabUni – a social information and communication infrastructure”, but as he said: at least it gives you an idea what it is about (slides of this talk are available here, Griesbaum was one of the few presenters that made it clear where I could find the slides afterwards).

A lot of social software in higher education is used in formal learning. Griesbaum wants to focus on a Knowledge Management approach that primarily supports informal learning. To that end he and his students designed a low cost (there was no budget) system from the bottom up. It is called CollabUni and is based on the open source e-portfolio solution (and smart little sister of Moodle) Mahara.

They did a first evaluation of the system in late 2009. There was little self-initiated knowledge activity by the 79 first year students. Roughly one-third of the students see an added value in CollabUni and declare themselves ready for active participation. Even though the knowledge processes that they aimed for don’t seem to be self-initiating and self-supporting, CollabUni still shows and stands for a possible low-cost and bottom-up approach towards developing social software. During the next steps of their roll out they will pay attention to the following:

- Social design is decisively important

- Administrative and organizational support components and incentive schemes are needed

- Appealing content (for example an initial repository of term papers or theses)

- Identify attractive use cases and applications

Call me a cynic, but if you have to try this hard: why bother? To me this really had the feeling of a technology trying to find a problem, rather than a technology being the solution to the problem. I wonder what the uptake of Facebook is with his students? I did ask him the question and he said that there has not been a lot of research into the use of Facebook in education. I guess that is true, but I am quite convinced there is a lot of use of Facebook in education. I believe that if he had really wanted to leverage social software for the informal part of learning, he should have started with what his students are actually using and tried to leverage that by designing technology in that context, instead of using another separate system.

Collaborative Innovation Networks (COINs)

The closing keynote of the conference was by Peter A. Gloor who currently works for the MIT Center for Collective Intelligence. Gloor has written a couple of books on how innovation happens in this networked world. Though his story was certainly entertaining I also found it a bit messy: he had an endless list of fascinating examples that in the end supported a message that he could have given in a single slide.

His main point is that large groups of people behave apparently randomly, but that there are patterns that can be analysed at the collective level. These patterns can give you insight into the direction people are moving. One way of reading the collective mind is by doing social network analysis. By combining the wisdom of the crowd with the wisdom of groups of experts (swarms) it is possible to make accurate predictions. One example he gave was how they had used reviews on the Internet Movie Database (the crowd) and on Rotten Tomatoes (the swarm) to predict, on the day before a movie opens in the theatres, how much the movie will bring in in total.

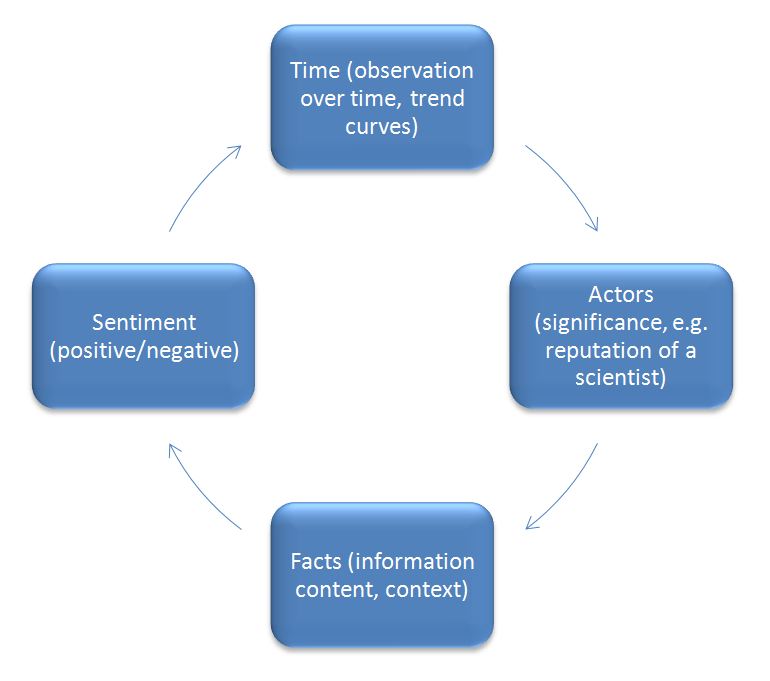

The process to do these kinds of predictions is as follows:

This kind of analysis can be done at a global level (like the movie example), but also within organizations, for example by analysing email archives or by equipping people with so-called social badges (which I first read about in Honest Signals), which measure who people have contact with and what kind of interaction they are having.

He then went on to talk about what he calls “Collaborative Innovation Networks” (COINs) which you can find around most innovative ideas. People who lead innovation (think Thomas Edison or Tim Berners-Lee) have the following characteristics:

- They are well connected (they have many “friends”)

- They have a high degree of interactivity (very responsive)

- They share to a very high degree

All of these characteristics are easy to measure electronically and thus automatically, so to find COINs you find the people who score high on these points. According to Gloor high-performing organizations work as collaborative innovation networks. Ideas progress from Collaborative Innovation Network (COIN) to Collaborative Learning Network (CLN) to Collaborative Interest Network (CIN).
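To make the idea of measuring these characteristics electronically a bit more concrete, here is a minimal Python sketch. It is not Gloor’s actual method: the message log, its fields (sender, recipient, hours taken to reply, items shared) and the equal weighting of the three characteristics are all my own assumptions for illustration.

```python
from collections import defaultdict

# Hypothetical message log, invented for illustration only:
# (sender, recipient, hours the sender took to reply, items shared in the message)
MESSAGES = [
    ("ann", "bob", 1.0, 2),
    ("ann", "cy", 0.5, 1),
    ("ann", "dee", 2.0, 3),
    ("bob", "ann", 6.0, 0),
    ("cy", "ann", 12.0, 1),
    ("dee", "bob", 24.0, 0),
]


def coin_scores(messages):
    """Score each person from 0 to 1 on connectedness, responsiveness and sharing."""
    contacts = defaultdict(set)      # who has been in touch with whom
    reply_hours = defaultdict(list)  # how long each sender took to reply
    shared = defaultdict(int)        # how many items each sender shared

    for sender, recipient, hours, items in messages:
        contacts[sender].add(recipient)
        contacts[recipient].add(sender)
        reply_hours[sender].append(hours)
        shared[sender] += items

    raw = {}
    for person in contacts:
        hours = reply_hours.get(person)
        # Faster average replies give a value closer to 1 (assumed formula).
        responsiveness = 1.0 / (1.0 + sum(hours) / len(hours)) if hours else 0.0
        raw[person] = (len(contacts[person]), responsiveness, shared[person])

    # Normalise each characteristic by its maximum, then average the three.
    maxima = [max(values[i] for values in raw.values()) or 1.0 for i in range(3)]
    return {
        person: sum(v / m for v, m in zip(values, maxima)) / 3
        for person, values in raw.items()
    }


if __name__ == "__main__":
    scores = coin_scores(MESSAGES)
    for person in sorted(scores, key=scores.get, reverse=True):
        print(f"{person}: {scores[person]:.2f}")
```

In this toy data set the person with the most contacts, the fastest replies and the most shared items ends up at the top of the ranking. A real analysis would need far richer data and a more careful weighting.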

Twitter is proving to be a very useful tool for this kind of analysis. Doing predictions for movies is relatively easy because people are honest in their feedback. It is much harder for things like stocks, because people game the system with their analysis. Twitter can be used (e.g. by searching for “hope”, “fear” and “worry” as indicators for sentiment) as people are honest in their feedback there.
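A keyword search like this is simple to sketch. The Python below is only an illustration under my own assumptions: the sample tweets are made up, the function name is hypothetical, and it simply measures which share of tweets contains at least one of the three indicator words, without guessing whether a word signals good or bad news.

```python
import re

# The three indicator words mentioned in the talk.
INDICATORS = {"hope", "fear", "worry"}


def indicator_share(tweets):
    """Return the fraction of tweets containing at least one indicator word."""
    if not tweets:
        return 0.0
    hits = sum(
        1
        for text in tweets
        # Exact word matches only: "hopes" or "worried" are not counted.
        if INDICATORS & set(re.findall(r"[a-z]+", text.lower()))
    )
    return hits / len(tweets)


# Made-up sample tweets, for illustration only.
sample = [
    "I hope the market recovers tomorrow",
    "So much fear around tech stocks today",
    "I worry about my pension fund",
    "Lunch was great",
]

if __name__ == "__main__":
    print(indicator_share(sample))
```

Tracking this share per day would give a crude time series to compare against something like a stock index.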

Finally he made a reference in his talk to the Allen curve (the high correlation between physical distance and communication, with a critical distance of 50 meters for technical communication). I am sure this curve is used by many office planners, but Gloor also found an Allen curve for technical companies around his university: it was about 3 miles.

Interesting Encounters

Outside of the sessions I spoke to many interesting people at the conference. Here are a couple (for my own future reference).

It had been a couple of years since I had last seen Peter Sereinigg from act2win. He has stopped being a Moodle partner and now focuses on projects in which he helps global virtual teams in how they communicate with each other. There was one thing that he and I could fully agree on: you first have to build some rapport before you can effectively work together. It seems like such an obvious thing, but for some reason it still doesn’t happen on many occasions.

Twitter allowed me to get in touch with Aldo de Moor. He had read my blog post about day 1 of this conference and suggested one of his articles for further reading about pattern languages (the article refers to a book on a pattern language for communication which looks absolutely fascinating). Aldo is an independent research consultant in the field of Community Informatics. That was interesting to me for two reasons:

- He is still actively publishing in peer reviewed journals and speaking at conferences, without being affiliated with a highly acclaimed research institute. He has written an interesting blog post about the pros and cons of working this way.

- I had never heard of this young field of community informatics and it is something I would like to explore further.

I also spent some time with Barend Jan de Jong who works at Wolters Noordhoff. We had some broad-ranging discussions mainly about the publishing field: the book production process and the information technology required to support this, what value a publisher can still add, e-books compared to normal books (he said how a bookcase says something about somebody’s identity, I agreed but said that a digital book related profile is way more accessible than the bookcase in my living room, note to self: start creating parody GoodReads accounts for Dutch politicians), the unclear if not unsustainable business model of the wonderful Guardian news empire and how we both think that O’Reilly is a publisher that seems to have its stuff fully in order.

Puzzling stuff

There were also some things at I-KNOW 2010 that were really from a different world. The keynote on the morning of the 3rd day was perplexing to me. Márta Nagy-Rothengass titled the talk “European ICT Research and Development Supporting the Expansion of Semantic Technologies and Shared Knowledge Management” and opened with a video message of Neelie Kroes talking in very general terms about Europe’s digital agenda. After that Nagy-Rothengass told us that the European Commission will be nearly doubling its investment into ICT to 11 billion Euros, after which she started talking about the “Call 5” of “FP7” (apparently that stands for the Seventh Framework Programme), the dates before which people should put their proposals in, the number of proposals received, etc., etc., etc. I am pro-EU, but I am starting to understand why people can make a living advising other people how best to apply for EU grants.

Another puzzling thing was the fact that people like me (with a corporate background) thought that the conference was quite theoretical and academic, whereas the researchers thought everything was very applied (maybe not enough research even!). I guess this shows that there is quite a schism between universities furthering the knowledge in this field and corporations who could benefit from picking the fruits of this knowledge. I hope my attendance at this great conference did its tiny part in bridging this gap.

Very helpful summary, thx, gives me a good sense of the many talks I missed.

Your final observation is exactly mine, talking to many academic researchers as well as practitioners at the conference. A lot of water will still need to flow through the Mur before this kind of research will truly have become actionable and, vice versa, before much ad hoc work in practice will get reflected upon more seriously.